Scalable Networks

Summary

This topic explains considerations for designing a scalable network.

Design for Scalability

Your network is going to change. The number of users will likely increase, they may be located anywhere, and they will use a wide variety of devices. Your network must be able to change along with its users. Scalability is the ability of a network to grow without losing availability and reliability.

To support a large, medium or small network, the network designer must develop a strategy to enable the network to be available and to scale effectively and easily. Included in a basic network design strategy are the following recommendations:

- Use expandable, modular equipment, or clustered devices that can be easily upgraded to increase capabilities. Device modules can be added to the existing equipment to support new features and devices without requiring major equipment upgrades. Some devices can be integrated in a cluster to act as one device to simplify management and configuration.

- Design a hierarchical network to include modules that can be added, upgraded, and modified, as necessary, without affecting the design of the other functional areas of the network. For example, create a separate access layer that can be expanded without affecting the distribution and core layers of the campus network.

- Create an IPv4 and IPv6 address strategy that is hierarchical. Careful address planning eliminates the need to re-address the network to support additional users and services.

- Choose routers or multilayer switches to limit broadcasts and filter other undesirable traffic from the network. Use Layer 3 devices to filter and reduce traffic to the network core.
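
The hierarchical addressing recommendation above can be illustrated with a short sketch using Python's standard `ipaddress` module. The prefixes (`10.16.0.0/16`, `2001:db8:acad::/48`), the per-building and per-VLAN prefix lengths, and the `building-N` names are assumptions chosen for illustration, not values from this topic. The point is that when each building owns one contiguous block, its VLANs summarize to a single route and new VLANs fit without re-addressing.

```python
import ipaddress

# Hypothetical campus block (example private range, not from the course).
campus = ipaddress.ip_network("10.16.0.0/16")

# Assumed plan: one /20 per building, one /24 per VLAN inside each building.
buildings = list(campus.subnets(new_prefix=20))
plan = {
    f"building-{i}": list(block.subnets(new_prefix=24))
    for i, block in enumerate(buildings[:3], start=1)
}

for name, vlans in plan.items():
    # All VLANs of a building collapse back into one summary route.
    summary = list(ipaddress.collapse_addresses(vlans))
    print(name, "summary:", summary[0], "VLAN subnets available:", len(vlans))

# The same idea for IPv6, using the documentation prefix 2001:db8::/32.
site6 = ipaddress.ip_network("2001:db8:acad::/48")
building6 = list(site6.subnets(new_prefix=56))[:3]   # one /56 per building
first_vlan6 = next(building6[0].subnets(new_prefix=64))  # one /64 per VLAN
print("IPv6 building 1:", building6[0], "first VLAN:", first_vlan6)
```

Because each building is a single aggregate, a distribution device would only need to advertise one summary per building toward the core under this plan.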

Plan for Redundancy

For many organizations, the availability of the network is essential to supporting business needs. Redundancy is an important part of network design. It can prevent disruption of network services by minimizing the possibility of a single point of failure. One method of implementing redundancy is by installing duplicate equipment and providing failover services for critical devices.

Another method of implementing redundancy is redundant paths, as shown in the figure above. Redundant paths offer alternate physical paths for data to traverse the network. Redundant paths in a switched network support high availability. However, due to the operation of switches, redundant paths in a switched Ethernet network may cause logical Layer 2 loops. For this reason, Spanning Tree Protocol (STP) is required.

STP eliminates Layer 2 loops when redundant links are used between switches. It does this by providing a mechanism for disabling redundant paths in a switched network until the path is necessary, such as when a failure occurs. STP is an open standard protocol, used in a switched environment to create a loop-free logical topology.
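
To make the idea of a loop-free logical topology concrete, here is a deliberately simplified sketch. It assumes the root is the switch with the lowest name, that all links have equal cost, and that ties are broken alphabetically. Real STP compares bridge IDs, path costs, and port IDs, so treat this as an illustration of the outcome (one redundant link held in reserve), not of the protocol itself. The switch names `S1` to `S3` are hypothetical.

```python
from collections import deque

def spanning_tree(links):
    """Return (root, forwarding_links, blocked_links) for an undirected topology.

    Simplification: lowest switch name is the root and every link costs the same.
    """
    neighbors = {}
    for a, b in links:
        neighbors.setdefault(a, set()).add(b)
        neighbors.setdefault(b, set()).add(a)

    root = min(neighbors)
    visited = {root}
    forwarding = set()
    queue = deque([root])
    while queue:
        current = queue.popleft()
        for peer in sorted(neighbors[current]):
            if peer not in visited:
                # First time we reach this switch: keep the link that got us here.
                visited.add(peer)
                forwarding.add(frozenset((current, peer)))
                queue.append(peer)

    # Every link not needed for the tree is redundant and would be blocked.
    blocked = {frozenset(link) for link in links} - forwarding
    return root, forwarding, blocked

# Hypothetical triangle of three switches: one of the three links is redundant.
links = [("S1", "S2"), ("S1", "S3"), ("S2", "S3")]
root, forwarding, blocked = spanning_tree(links)
print("root:", root)
print("blocked:", [tuple(sorted(link)) for link in blocked])
```

If one of the forwarding links is removed from `links` and the function is run again, the previously blocked link becomes part of the tree, which mirrors how a redundant path is brought into service after a failure.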

Using Layer 3 in the backbone is another way to implement redundancy without the need for STP at Layer 2. Layer 3 also provides best path selection and faster convergence during failover.

Reduce Failure Domain Size

A well-designed network not only controls traffic, but also limits the size of failure domains. A failure domain is the area of a network that is impacted when a critical device or network service experiences problems.

The function of the device that initially fails determines the impact of a failure domain. For example, a malfunctioning switch on a network segment normally affects only the hosts on that segment. However, if the router that connects this segment to others fails, the impact is much greater.

The use of redundant links and reliable enterprise-class equipment minimizes the chance of disruption in a network. Smaller failure domains reduce the impact of a failure on company productivity. They also simplify the troubleshooting process, thereby shortening the downtime for all users.
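
The switch-versus-router comparison above can be sketched as a small reachability check. The topology, device names (`core`, `R1`, `SW1`, `PC1`, and so on), and the definition used here (hosts that can no longer reach the core) are assumptions made for illustration.

```python
def build_topology(links):
    """Turn a list of (a, b) links into an undirected adjacency map."""
    topology = {}
    for a, b in links:
        topology.setdefault(a, set()).add(b)
        topology.setdefault(b, set()).add(a)
    return topology

def reachable(topology, start, failed):
    """Nodes still reachable from `start` when the `failed` node is removed."""
    if start == failed:
        return set()
    seen, stack = {start}, [start]
    while stack:
        node = stack.pop()
        for peer in topology.get(node, ()):
            if peer != failed and peer not in seen:
                seen.add(peer)
                stack.append(peer)
    return seen

def failure_domain(topology, hosts, core, failed):
    """Hosts that can no longer reach the core after `failed` goes down."""
    still_up = reachable(topology, core, failed)
    return sorted(h for h in hosts if h not in still_up)

# Hypothetical network: one router connecting two access switches to the core.
links = [
    ("core", "R1"), ("R1", "SW1"), ("R1", "SW2"),
    ("SW1", "PC1"), ("SW1", "PC2"), ("SW2", "PC3"), ("SW2", "PC4"),
]
topology = build_topology(links)
hosts = ["PC1", "PC2", "PC3", "PC4"]

print("SW1 fails:", failure_domain(topology, hosts, "core", "SW1"))
print("R1 fails: ", failure_domain(topology, hosts, "core", "R1"))
```

In this sketch the switch failure isolates only the two hosts on its segment, while the router failure isolates all four, which is the difference in failure domain size described above.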

Limiting the Size of Failure Domains

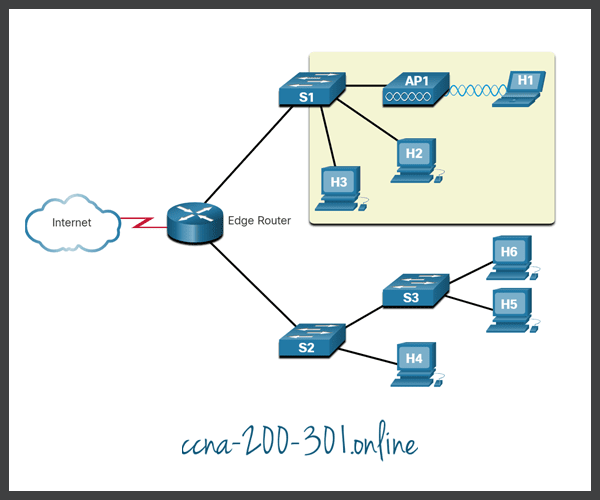

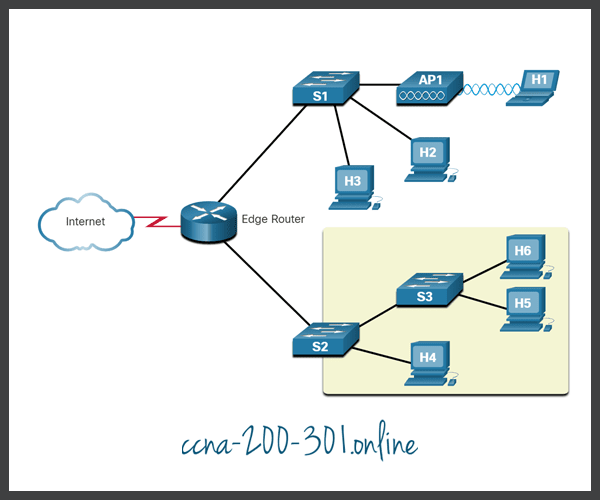

Because a failure at the core layer of a network can have a potentially large impact, the network designer often concentrates on efforts to prevent failures. These efforts can greatly increase the cost of implementing the network. In the hierarchical design model, it is easiest and usually least expensive to control the size of a failure domain in the distribution layer. In the distribution layer, network errors can be contained to a smaller area, thus affecting fewer users. When using Layer 3 devices at the distribution layer, every router functions as a gateway for a limited number of access layer users.

Switch Block Deployment

Routers, or multilayer switches, are usually deployed in pairs, with access layer switches evenly divided between them. This configuration is referred to as a building, or departmental, switch block. Each switch block acts independently of the others. As a result, the failure of a single device does not cause the network to go down. Even the failure of an entire switch block does not affect a significant number of end users.

Increase Bandwidth

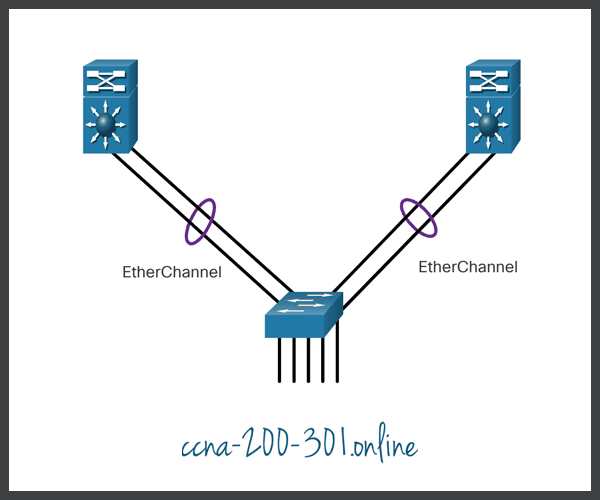

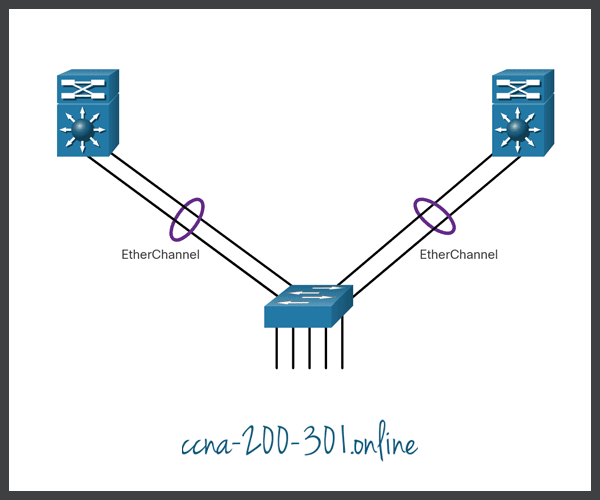

In hierarchical network design, some links between access and distribution switches may need to process a greater amount of traffic than other links. As traffic from multiple links converges onto a single, outgoing link, it is possible for that link to become a bottleneck. Link aggregation, such as EtherChannel, allows an administrator to increase the amount of bandwidth between devices by creating one logical link made up of several physical links.
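
A quick way to reason about such a bottleneck is the oversubscription ratio: total possible access bandwidth divided by total uplink bandwidth. The sketch below uses assumed figures (48 access ports at 1 Gb/s, uplinks at 10 Gb/s) that are not taken from this topic.

```python
def oversubscription(access_ports, port_gbps, uplinks, uplink_gbps):
    """Ratio of worst-case access bandwidth to available uplink bandwidth."""
    return (access_ports * port_gbps) / (uplinks * uplink_gbps)

# Hypothetical access switch: 48 x 1 Gb/s ports.
single_uplink = oversubscription(48, 1, uplinks=1, uplink_gbps=10)
bundled_uplink = oversubscription(48, 1, uplinks=2, uplink_gbps=10)

print(f"one uplink:   {single_uplink:.1f}:1")
print(f"two bundled:  {bundled_uplink:.1f}:1")
```

Bundling a second physical link halves the ratio in this example. Whether a given ratio is acceptable depends on the actual traffic pattern.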

EtherChannel uses the existing switch ports. Therefore, additional costs to upgrade the link to a faster and more expensive connection are not necessary. The EtherChannel is seen as one logical link using an EtherChannel interface. Most configuration tasks are done on the EtherChannel interface, instead of on each individual port, ensuring configuration consistency throughout the links. Finally, the EtherChannel configuration takes advantage of load balancing between links that are part of the same EtherChannel, and depending on the hardware platform, one or more load-balancing methods can be implemented.
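
The load-balancing idea can be sketched as a per-flow hash that maps each source and destination pair to one member link. The XOR-and-modulo hash, the MAC addresses, and the port names below are illustrative assumptions. The methods actually available depend on the hardware platform, as noted above.

```python
def pick_member_link(src_mac, dst_mac, member_links):
    """Map a flow to one physical link using a simple XOR hash (illustrative only)."""
    src = int(src_mac.replace(":", ""), 16)
    dst = int(dst_mac.replace(":", ""), 16)
    index = (src ^ dst) % len(member_links)
    return member_links[index]

# Hypothetical two-port bundle.
members = ["Gi0/1", "Gi0/2"]

flow_a = pick_member_link("00:00:00:00:00:01", "00:00:00:00:00:0a", members)
flow_b = pick_member_link("00:00:00:00:00:02", "00:00:00:00:00:0a", members)

print("flow A uses", flow_a)
print("flow B uses", flow_b)
```

Each flow always hashes to the same link, so its frames stay in order, while different flows can spread across the members. A single large flow therefore never gets more than one physical link's worth of bandwidth in this model.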

Expand the Access Layer

The network must be designed so that access can be extended to more individuals and devices, as needed. An increasingly important option for extending access layer connectivity is wireless. Providing wireless connectivity offers many advantages, such as increased flexibility, reduced costs, and the ability to grow and adapt to changing network and business requirements.

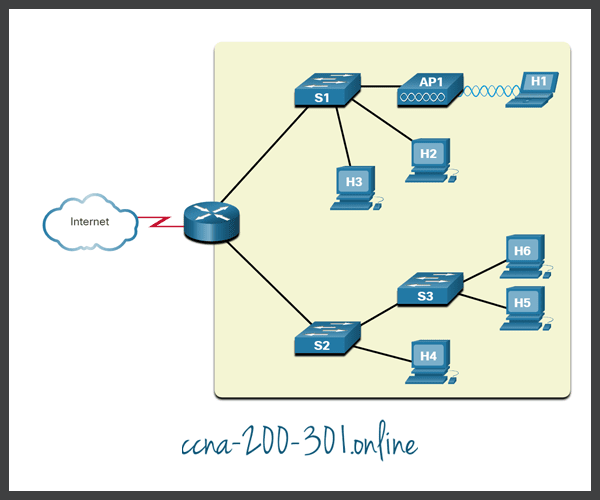

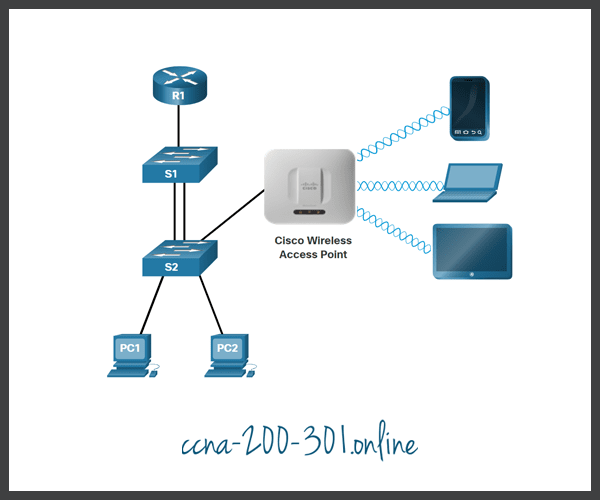

To communicate wirelessly, end devices require a wireless NIC that incorporates a radio transmitter/receiver and the required software driver to make it operational. Additionally, a wireless router or a wireless access point (AP) is required for users to connect, as shown in the figure.

There are many considerations when implementing a wireless network, such as the types of wireless devices to use, wireless coverage requirements, interference considerations, and security considerations.

Tune Routing Protocols

Advanced routing protocols, such as Open Shortest Path First (OSPF), are used in large networks.

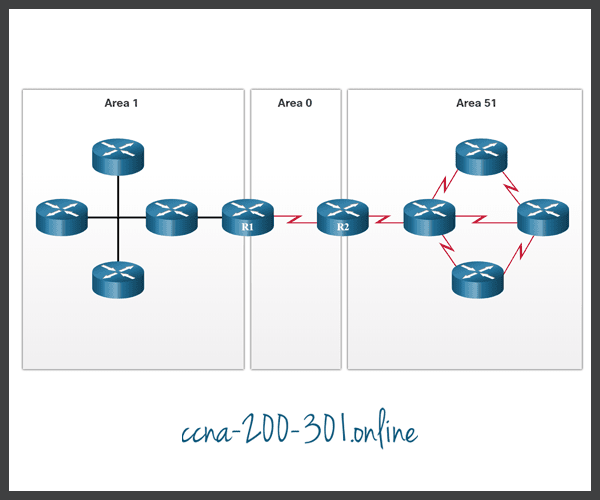

OSPF is a link-state routing protocol. As shown in the figure, OSPF works well for larger hierarchical networks where fast convergence is important. OSPF routers establish and maintain neighbor adjacencies with other connected OSPF routers. OSPF routers synchronize their link-state database. When a network change occurs, link-state updates are sent, informing other OSPF routers of the change and establishing a new best path, if one is available.
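
Once the link-state databases are synchronized, each router runs a shortest-path-first calculation (Dijkstra's algorithm) over its own copy. The sketch below shows that calculation and how a link failure leads to a new best path. The three-router topology and the link costs are hypothetical, and the dictionary is only a stand-in for a real link-state database.

```python
import heapq

def spf(lsdb, source):
    """Dijkstra's algorithm: lowest total cost from `source` to every router."""
    best = {source: 0}
    previous = {}
    heap = [(0, source)]
    while heap:
        dist, router = heapq.heappop(heap)
        if dist > best[router]:
            continue  # stale heap entry, a cheaper path was already found
        for neighbor, link_cost in lsdb.get(router, {}).items():
            candidate = dist + link_cost
            if candidate < best.get(neighbor, float("inf")):
                best[neighbor] = candidate
                previous[neighbor] = router
                heapq.heappush(heap, (candidate, neighbor))
    return best, previous

# Hypothetical link-state database: router -> {neighbor: link cost}.
lsdb = {
    "R1": {"R2": 10, "R3": 1},
    "R2": {"R1": 10, "R3": 1},
    "R3": {"R1": 1, "R2": 1},
}

before, before_prev = spf(lsdb, "R1")
print("R1 -> R2 cost:", before["R2"], "via", before_prev["R2"])

# Simulate a link-state update: the R1-R3 link goes down.
del lsdb["R1"]["R3"]
del lsdb["R3"]["R1"]

after, after_prev = spf(lsdb, "R1")
print("R1 -> R2 cost after failure:", after["R2"], "via", after_prev["R2"])
```

Before the failure, R1 prefers the two-hop path through R3 because its total cost is lower. After the update, it falls back to the direct, higher-cost link.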
